" LLM text, or content generated using large language models, is not inherently bad for SEO. Google evaluates content based on usefulness, clarity, and user satisfaction, not its source. With proper human editing, LLM-generated content can enhance SEO by providing organized, valuable, and readable information."

If you have been publishing content online recently, you have likely felt the same mild panic as everyone else. You use AI tools. Your rivals use AI tools. Then someone claims that Google is cracking down on LLM text, and suddenly everything seems at risk. Rankings. Traffic. Trust. It feels like a single mistake could wipe out your website.

Most of the confusion comes from a misunderstanding of what Google actually evaluates. The short version is simple. Google does not penalize content because it originated from large language models. It assesses whether the content is useful to users. The rest is just noise.

Let's slow down and look at what actually matters.

What LLM text actually means

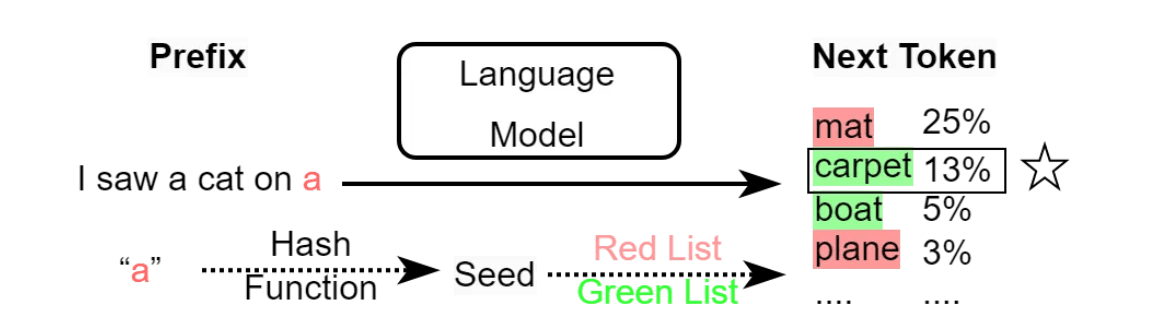

LLM text is content produced with the help of large language models. These models are trained on massive amounts of language data and learn patterns in how humans write, explain and answer questions. That is it. They do not have intent. They do not know facts on their own. They predict what text comes next based on probabilities.
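To make "predicting what comes next based on probabilities" concrete, here is a toy sketch. It is not how a real large language model works internally; it assumes a tiny made-up corpus and simply counts which word follows which. The function names and the sample text are illustrative only.

```python
from collections import Counter, defaultdict

def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    follow_counts = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        follow_counts[current_word][next_word] += 1
    return follow_counts

def next_word_probabilities(model, word):
    """Turn raw follow counts into probabilities for the next word."""
    counts = model.get(word.lower(), Counter())
    total = sum(counts.values())
    if total == 0:
        return {}  # the model has never seen this word
    return {candidate: count / total for candidate, count in counts.items()}

# Made-up training text, for illustration only.
corpus = "the page loads fast the page ranks well the site loads fast"
model = train_bigram_model(corpus)
probabilities = next_word_probabilities(model, "page")
print(probabilities)
```

The sketch has no idea whether a page really loads fast or ranks well. It only reflects how often words appeared together in its training text, which is why the output of a real model still needs a human to check facts and intent.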

When people say “AI content,” they often imagine a robotic wall of generic paragraphs. In reality, LLM text can range from raw unedited output to carefully shaped drafts that are refined by humans. Google does not see a label that says “written by a model.” It sees the final page users interact with.

Why the SEO fear started

The anxiety surrounding AI content and SEO did not appear overnight. Low-quality automated content has been around for a long time. Spun articles. Keyword soup. Pages made not to help anyone, but merely to rank. Those pages performed poorly over the long term, and Google's Panda and Helpful Content updates pushed them out of the results.

Large language models now make it easier to produce text at scale. Of course, some people misuse that scale. When those pages fail, the LLM text itself gets the blame rather than the way it was used.

The myth starts here.

The biggest myth about AI and rankings

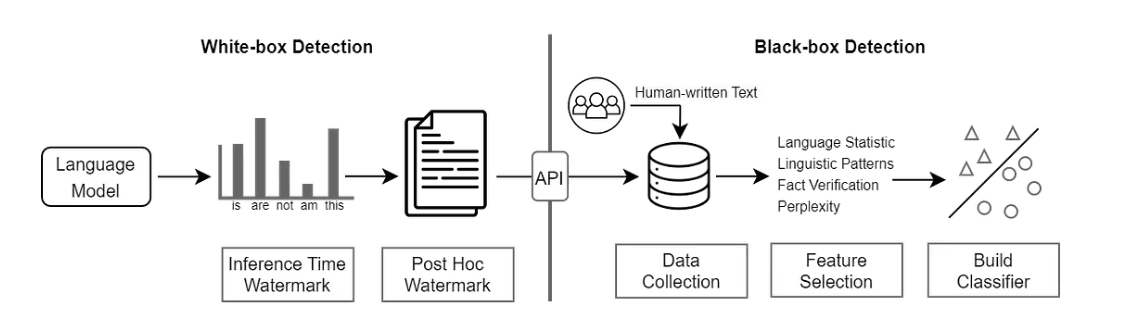

One of the most widespread misconceptions is that Google automatically identifies and rejects content produced by artificial intelligence. This is not supported by any evidence. Google has stated time and time again that quality, not production method, is the basis for evaluating content.

Content can rank if it gives a clear, accurate, and helpful answer to a user's query. It suffers if it is incoherent, repetitive, or written without much thought. Human-written text is no exception.

Bad content does not fail because a model was used; rather it fails because it is bad.

What Google actually evaluates

Google's systems focus on signals that correspond with user satisfaction. These include relevance to the query, originality, clarity, structure, and whether the page satisfies search intent.

For instance, when a user searches for "how to fix a slow website," Google expects actionable advice. Not filler. Not superficial definitions. Regardless of how the draft was created, pages that provide steps, explain causes, and demonstrate understanding perform better.

For this reason, SEO and content are closely related. The goal of SEO is not to trick algorithms. The goal is to meet user expectations better than competing pages do.

Where LLM text fits when used responsibly

LLM text works best as a starting point. Models excel at creating first drafts, organizing information, and summarizing ideas. Without direction, they struggle with judgment, prioritization, and the nuances of the real world.

Issues arise when authors treat LLM output as final content. The writing sounds generic. The examples are vague. It all feels a little empty and safe.

The outcome is different when authors treat it as raw material. They provide context. They cut parts that are not needed. They bring in experience. They match the tone to what the audience needs.

At that point, the finished product is no longer "AI content." It is edited content in which AI was used as a tool.

Good vs poor LLM generated content

A poor example looks like this: an SEO page that repeats definitions, avoids specifics, and says things like "it is important to focus on quality content" without explaining how or why. In theory it says the right things, but nobody benefits from it.

A good example adds structure to the same subject. It clarifies trade-offs. It shows what works and what does not. It anticipates misunderstandings and answers follow-up questions before the reader asks them.

Both pages could have started as LLM text. Only one respects the reader's time.
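As a rough illustration of how an editor might spot the poor pattern described above, the sketch below flags stock phrases and repeated sentences in a draft. It is a hypothetical editorial aid, not something Google uses or publishes. The phrase list, function names, and sample draft are all assumptions made up for this example.

```python
import re
from collections import Counter

# Hypothetical phrase list for illustration; adjust it to your own niche.
FILLER_PHRASES = [
    "it is important to",
    "in today's digital world",
    "quality content",
]

def split_sentences(text):
    """Split text into sentences on ., !, or ? followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [part for part in parts if part]

def review_draft(text):
    """Return filler phrases found and sentences that appear more than once."""
    lowered = text.lower()
    filler_hits = [phrase for phrase in FILLER_PHRASES if phrase in lowered]
    sentence_counts = Counter(s.lower() for s in split_sentences(text))
    repeated = [s for s, n in sentence_counts.items() if n > 1]
    return {"filler_phrases": filler_hits, "repeated_sentences": repeated}

# Made-up draft, for illustration only.
draft = (
    "It is important to focus on quality content. "
    "SEO matters for every site. "
    "SEO matters for every site."
)
report = review_draft(draft)
print(report)
```

A check like this can only point at suspicious spots. Deciding whether a flagged sentence needs an explanation, an example, or deletion is still the editor's call.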

The role of human editing

Human editing is not about correcting grammar. It is about aligning the page with intent. Editors decide what is important, what should be left out, and what needs more detail. They verify the facts. They adjust the tone. They make sure the page reads as though it was written for a human, not a model.

This is where SEO value is created. Google rewards pages that seem purposeful and helpful. Models generate possibilities. People decide the direction.

How models are designed for question answering

LLM developers train models to handle question answering by exposing them to patterns across a wide range of formats and explanations. This lets the models produce organized responses quickly. It does not guarantee accuracy or relevance to a particular audience.

Human judgment fills that gap. Content producers can steer model output toward clarity rather than generic coverage when they have a thorough understanding of their users.

Why production method matters less than outcome

From an SEO perspective, the outcome is what matters. Does the page solve the problem? Is it readable? Is it accurate? Is it original in how it explains the topic?

Google cannot see your writing process. It sees how users interact with the result. Scroll depth, engagement and satisfaction are far more important than whether a model helped draft the page.

A grounded takeaway

LLM text is not bad for SEO. Low-effort content is. Large language models are tools, not shortcuts to rankings. When used carefully, they can help improve writing. When used carelessly, they produce pages that seem interchangeable and empty.

Your content will stand on its own if you concentrate on its usefulness, intent, and clarity. Google cares about value. The rest is incidental.

The practical advice is straightforward. Use LLMs to assist thinking, not replace it. Edit deliberately. Write for real questions. When content earns attention, rankings usually follow.

FAQs

Q1: Does Google penalize AI-generated content?

A1: No, Google does not penalize content simply because it was generated using AI or LLMs. The focus is on quality, usefulness, and whether it meets user intent.

Q2: Can LLM text help with SEO?

A2: Yes, when used responsibly, LLMs can assist in drafting, organizing, and summarizing content. Human editing ensures the content is accurate, clear, and user-focused.

Q3: What makes LLM content “good” for SEO?

A3: Good LLM content provides detailed explanations, actionable advice, and addresses reader questions effectively. It is structured, original, and edited to match the audience’s needs.

Q4: Should I rely solely on LLMs for content creation?

A4: No, LLMs should be used as a tool, not a replacement for human judgment. Human input ensures accuracy, context, and engagement, which are critical for SEO success.

Q5: What factors does Google actually evaluate for content ranking?

A5: Google evaluates relevance to user queries, originality, clarity, structure, readability, and whether the content satisfies search intent.